Python comparison - JIT, CUDA and DASK

Python has become the language of choice for data scientists and data analysts. It is easy to use and has many analytical support libraries, but Python programs aren't particularly fast. This has driven people to create a set of tools that help Python programs scale up and scale out.

We can compare approaches with a simple program that I adapted to JIT, GPU, and distributed computing.

Demonstration Caveat

Distributed computing works best on problems with a lot of I/O that can be spread across workers. This program has no I/O and a high data-transfer-to-computation ratio.

GPUs pay a high cost for loading data into, and unloading data from, GPU memory. This means they work best when the computation-to-data-transfer ratio is significantly higher than it is in this program.
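To put rough numbers on that ratio, here is a back-of-the-envelope sketch. The row count and the three inputs per row come from the demonstration; the 8 bytes per value is my assumption (64-bit floats), since the post does not state the data type.

```python
# Rough estimate of data moved versus work done per row.
ROWS = 10_000_000        # rows in the demonstration
INPUTS_PER_ROW = 3       # x, y and z
BYTES_PER_VALUE = 8      # ASSUMPTION: 64-bit floats; not stated in the post

bytes_in = ROWS * INPUTS_PER_ROW * BYTES_PER_VALUE   # inputs sent to the GPU or workers
bytes_out = ROWS * BYTES_PER_VALUE                   # one result per row sent back
ops_per_row = 3 + 2                                  # three logs and two multiplies
bytes_per_row = (bytes_in + bytes_out) // ROWS

print(f"input:  {bytes_in / 1e6:.0f} MB")
print(f"output: {bytes_out / 1e6:.0f} MB")
print(f"{bytes_per_row} bytes moved for every {ops_per_row} operations")
```

Under that assumption, 240 MB goes in and 80 MB comes back, which is 32 bytes moved for only five floating-point operations per row. That is very little work to amortize the transfer cost against.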

Demonstration

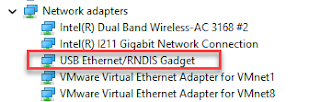

The sample Python program creates 10,000,000 three-variable rows that it then uses as inputs for 10,000,000 iterations of log(x)*log(y)*log(z). The results are returned in a 10,000,000-long result array. The times for the various options are shown in the accompanying drawing.

- Non-JIT: is the default execution model for a standard Python program.

- JIT: represents the Numba JIT compiled code.

- JIT Parallel: represents Numba JIT compiled code where methods containing iteration loops have been marked for JIT auto-parallelization where possible. In the test app, this means the 10,000,000-iteration loop is spread across as many worker threads as are available.

- GPU: moves computational code from the CPU to the NVIDIA GPU. The 10,000,000 calculations are distributed across the GPU's 2,000+ cores. Computation iterator loops are replaced with a mapping of the calculation across those cores. The mapped functions execute on the cores until all are done. The results are aggregated by collecting the data from GPU memory.

- DASK: distributes computational work across a set of threads either in process or on DASK worker processes. The 10,000,000 input values are allocated across the worker cluster. Remote task invocation causes each of the worker nodes to iterate across the data allocated to that DASK worker thread. The results are aggregated by collecting data from each worker node.
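The first three options can be sketched roughly as follows. This is not the original test program, just a minimal illustration of the same calculation; the function names are hypothetical, the array size is reduced to 100,000 to keep it quick, and the `try`/`except` fallback is my addition so the sketch still runs (without any speedup) where Numba is not installed.

```python
import math
import numpy as np

try:
    from numba import njit, prange
except ImportError:
    # ASSUMPTION: fallback for machines without Numba; no speedup in this case.
    prange = range

    def njit(*args, **kwargs):
        if len(args) == 1 and callable(args[0]) and not kwargs:
            return args[0]            # used as a bare @njit
        return lambda func: func      # used as @njit(parallel=True)


def log_product_python(x, y, z):
    """Non-JIT: an ordinary interpreted Python loop."""
    out = np.empty(x.shape[0])
    for i in range(x.shape[0]):
        out[i] = math.log(x[i]) * math.log(y[i]) * math.log(z[i])
    return out


@njit
def log_product_jit(x, y, z):
    """JIT: the same loop, compiled by Numba on first call."""
    out = np.empty(x.shape[0])
    for i in range(x.shape[0]):
        out[i] = math.log(x[i]) * math.log(y[i]) * math.log(z[i])
    return out


@njit(parallel=True)
def log_product_parallel(x, y, z):
    """JIT Parallel: prange lets Numba split the loop across threads."""
    out = np.empty(x.shape[0])
    for i in prange(x.shape[0]):
        out[i] = math.log(x[i]) * math.log(y[i]) * math.log(z[i])
    return out


# Small demonstration input; the post uses 10,000,000 rows.
rng = np.random.default_rng(0)
x, y, z = rng.uniform(1.0, 100.0, size=(3, 100_000))
baseline = log_product_python(x, y, z)
print(np.allclose(baseline, log_product_jit(x, y, z)))
print(np.allclose(baseline, log_product_parallel(x, y, z)))
```

The only differences between the three functions are the decorator and `prange`. When timing something like this, keep in mind that the first call to a Numba-compiled function includes compilation time.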

Parallel JIT is the easiest option to implement, needing only simple annotations. It changes the least code and can provide the highest single-machine performance. GPU and DASK overtake plain JIT when given larger or more complex calculations.
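The allocate, compute, and collect pattern behind the DASK option can be sketched with the standard library alone. To be clear, this is not DASK and not the original test code: it swaps in `concurrent.futures.ThreadPoolExecutor` as a stand-in for in-process worker threads, and all function names are hypothetical. It shows the shape of the approach, not its performance, since pure Python threads will not speed up this calculation.

```python
import math
from concurrent.futures import ThreadPoolExecutor


def log_product_chunk(rows):
    """Worker task: iterate over only the rows allocated to this worker."""
    return [math.log(x) * math.log(y) * math.log(z) for x, y, z in rows]


def partition(rows, n_parts):
    """Split rows into at most n_parts contiguous, nearly equal chunks."""
    size = max(1, math.ceil(len(rows) / n_parts))
    return [rows[i:i + size] for i in range(0, len(rows), size)]


def distributed_log_product(rows, n_workers=4):
    """Allocate rows to workers, run the task on each, then collect results."""
    chunks = partition(rows, n_workers)
    with ThreadPoolExecutor(max_workers=n_workers) as pool:
        # map() yields results in chunk order, so row order is preserved.
        partial_results = pool.map(log_product_chunk, chunks)
        return [value for part in partial_results for value in part]


# Small demonstration input; the post uses 10,000,000 rows.
rows = [(float(i), float(i + 1), float(i + 2)) for i in range(2, 1002)]
results = distributed_log_product(rows)
print(len(results))
```

With real worker processes or remote nodes, each chunk and each partial result also has to be serialized and moved, which is where the data transfer cost comes from.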

Figure: Test run results
