Demonstrating Docker on Raspberry Pi is more than a party trick.

Big pieces of the 2018 Microsoft Build conference were about applied machine learning models and secure IoT. One of the keynote demos was called "Scott or Not", in which a Raspberry Pi used a machine learning vision model to determine whether the person in front of a camera "looked like Scott". Some of the most interesting parts of the demo were not obvious without a booth demo later in the day.

Hobbyists are often OK with a hand-crafted build that follows manual script instructions. That approach doesn't work in a commercial environment with hundreds or thousands of units. Microsoft took a more enterprise-oriented approach by creating a modular demo that supports easier automation. The demonstration code is organized into Docker images, with each function of the pipeline isolated in its own container. This makes it possible to update tools, languages, and code without any updates to the core system.

The diagram on the right shows the flow for the vision recognition example program demonstrated at MS Build 2018.

The machine vision model was generated in Azure and bundled into the application so that it can be run "offline" from Azure. The model can be updated in Azure by feeding it additional images. The model loaded onto the R-Pi can be updated from Azure.


You can find the source code for Raspberry Pi or Windows 10 on GitHub. Docker on Windows does not support device access, which means the MS Build 2018 Windows code is more of a conceptual demonstration.


Demonstration Flow


The demo runs the following steps:

- Take a picture using the embedded camera.

- Save the picture to disk.

- Evaluate the picture to determine if it "is-Scott".

- Post the results to Twitter.

- Display the results on the attached LED "hat".
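The five steps above can be sketched as a single function that takes each step as a parameter. This is only an illustration of the ordering, not the demo's actual code: `FlowResult`, `run_flow_once`, and the lambda stand-ins are hypothetical names, and the stand-ins replace the real camera, model, Twitter call, and LED hat so the sketch runs anywhere.

```python
from dataclasses import dataclass
from typing import Callable


@dataclass
class FlowResult:
    image_path: str
    is_scott: bool


def run_flow_once(
    take_picture: Callable[[], bytes],
    save_picture: Callable[[bytes], str],
    evaluate: Callable[[str], bool],
    post_result: Callable[[FlowResult], None],
    display_result: Callable[[FlowResult], None],
) -> FlowResult:
    """Run the five steps once, in the order listed above."""
    image = take_picture()                # 1. take a picture
    image_path = save_picture(image)      # 2. save it to disk
    is_scott = evaluate(image_path)       # 3. evaluate the picture
    result = FlowResult(image_path, is_scott)
    post_result(result)                   # 4. post the result
    display_result(result)                # 5. show it on the LED hat
    return result


# Hypothetical stand-in steps: each one records its name and returns fake data.
calls = []
demo_result = run_flow_once(
    take_picture=lambda: calls.append("take") or b"fake-image-bytes",
    save_picture=lambda image: calls.append("save") or "/tmp/frame.jpg",
    evaluate=lambda path: calls.append("evaluate") or True,
    post_result=lambda result: calls.append("post"),
    display_result=lambda result: calls.append("display"),
)
print(calls)
```

Passing the steps in as functions mirrors the modular idea in a small way: any one step can be swapped for a test double without touching the others.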


Modular Deployment with Docker

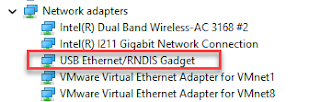

The Microsoft Build 2018 hardware environment consisted of a Raspberry Pi 3, a camera, and a Sense Hat LED board. The software environment consisted of Azure IoT Hub, Python, a C# scripting environment, and the device drivers for the camera and Sense Hat.

Container-to-container communication is done over HTTP.

- The Startup Container repeats this process:

  - Take a picture.

  - Call the Custom Vision Container over HTTP.

- The Custom Vision Container is responsible for analyzing the image.

  - It runs an image recognition model against the passed image. This vision model was created in Azure and built into the Docker image.

  - It invokes the Azure Functions Container via HTTP.

- The Azure Functions Container is responsible for informing the display and notification components of the vision analysis results.

  - It posts the information to Twitter using Azure APIs.

  - It calls the Startup Container via HTTP, informing it of the results.

- The Startup Container displays the match/no-match icon on the Sense Hat LEDs.

The demonstration exposes the containers' HTTP ports publicly, which should make it easier to inject test data at various pipeline steps.
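Because each step listens on HTTP, injecting test data can be as simple as posting a request to the step you want to exercise. The sketch below shows the idea using only the Python standard library. Everything specific in it is an assumption made for illustration: the `/analyze` path, the JSON payload shape, the `AnalyzeHandler` stand-in, and the filename rule used in place of a real vision model. The actual endpoints and payloads of the Build demo are not described here.

```python
import json
import threading
import urllib.request
from http.server import BaseHTTPRequestHandler, HTTPServer


class AnalyzeHandler(BaseHTTPRequestHandler):
    """Hypothetical stand-in for a pipeline step that accepts a JSON POST."""

    def do_POST(self):
        length = int(self.headers.get("Content-Length", 0))
        payload = json.loads(self.rfile.read(length) or b"{}")
        # Placeholder rule instead of a real vision model.
        image = payload.get("image")
        body = json.dumps({"image": image, "is_scott": image == "scott.jpg"}).encode("utf-8")
        self.send_response(200)
        self.send_header("Content-Type", "application/json")
        self.send_header("Content-Length", str(len(body)))
        self.end_headers()
        self.wfile.write(body)

    def log_message(self, format, *args):
        pass  # keep the example quiet


def post_json(url: str, payload: dict) -> dict:
    """POST a JSON payload and return the decoded JSON reply."""
    request = urllib.request.Request(
        url,
        data=json.dumps(payload).encode("utf-8"),
        headers={"Content-Type": "application/json"},
        method="POST",
    )
    # An empty ProxyHandler makes the call ignore any proxy environment variables.
    opener = urllib.request.build_opener(urllib.request.ProxyHandler({}))
    with opener.open(request, timeout=5) as response:
        return json.loads(response.read())


def demo() -> dict:
    """Start the stand-in step on a free local port and inject one test request."""
    server = HTTPServer(("127.0.0.1", 0), AnalyzeHandler)  # port 0 picks any free port
    threading.Thread(target=server.serve_forever, daemon=True).start()
    try:
        url = f"http://127.0.0.1:{server.server_port}/analyze"
        return post_json(url, {"image": "scott.jpg"})
    finally:
        server.shutdown()
        server.server_close()


if __name__ == "__main__":
    print(demo())
```

Against a real deployment, only `post_json` would be needed, pointed at whichever published port belongs to the step under test.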

Future Enhancements?

This program demonstrates machine vision in a distributed environment, using IoT Hub to manage remote IoT devices. It is a demonstration application created in a time crunch. The Startup Container mixes three concerns: initiating the process, taking the picture, and displaying the analysis results. Those responsibilities should be isolated from each other, possibly by splitting that one container into two or three.
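One possible shape for that split is sketched below: the trigger only starts a cycle, while picture taking and result display sit behind narrow interfaces that could each become their own container. All names here (`Camera`, `ResultDisplay`, `Trigger`, `FakeCamera`, `RecordingDisplay`) are hypothetical, and the sketch makes direct in-process calls where the real demo would use HTTP between containers.

```python
from typing import Callable, Protocol


class Camera(Protocol):
    def capture(self) -> bytes: ...


class ResultDisplay(Protocol):
    def show(self, is_match: bool) -> None: ...


class FakeCamera:
    """Stand-in for a component that only takes pictures."""

    def capture(self) -> bytes:
        return b"fake-image-bytes"


class RecordingDisplay:
    """Stand-in for a component that only drives the LEDs."""

    def __init__(self) -> None:
        self.shown = []

    def show(self, is_match: bool) -> None:
        self.shown.append(is_match)


class Trigger:
    """Only starts a cycle; capture and display live behind interfaces."""

    def __init__(
        self,
        camera: Camera,
        analyze: Callable[[bytes], bool],
        display: ResultDisplay,
    ) -> None:
        self.camera = camera
        self.analyze = analyze
        self.display = display

    def run_cycle(self) -> bool:
        image = self.camera.capture()
        is_match = self.analyze(image)
        self.display.show(is_match)
        return is_match


# Placeholder analysis: any non-empty image counts as a match.
display = RecordingDisplay()
trigger = Trigger(FakeCamera(), analyze=lambda image: len(image) > 0, display=display)
trigger.run_cycle()
print(display.shown)
```

With the concerns separated this way, the camera or the display could be replaced, or tested on a machine without the hardware, without changing the trigger.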

Maybe demonstration enhancements would be a good conference lab session?

Fini

Started: 2018 05 25
