Multi-Node Kubernetes with KIND and Docker Desktop

You can run multi-node Linux Kubernetes clusters with full Linux command-line support using the KIND project for Kubernetes. Let's walk through how to set up a multi-node Kubernetes cluster on a single machine as a learning environment and a CI/CD testing environment.


Windows 10 - WSL2 - Docker

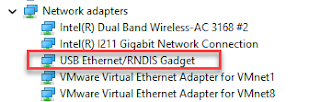

The best way to run Linux Docker containers on Windows 10 is with the WSL2 integration. Docker will offer to enable WSL2 integration as part of its installation if you are running a late enough version of Windows 10.

All of the commands are the same from the Windows prompt or from a Unix prompt. Open a Linux/Unix prompt if you have WSL2 or are running this on a Mac. Open a Git Bash prompt if you are on Windows with Docker but without WSL2.

Install, Enable and Verify

Verify that Kubernetes isn't already running.

    $ kubectl cluster-info
    To further debug and diagnose cluster problems, use 'kubectl cluster-info dump'.
    Unable to connect to the server: EOF

Install kind in WSL2's Linux using this script. Copy and paste it into your terminal window.

    cd /tmp
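Only the first line of the install script appears here. As a rough sketch of one way to complete the install, following the download pattern from the kind quick start rather than the original script, the helper below builds a release URL and installs the binary. The function names `kind_url` and `install_kind` are made up for this example, and the default version, OS, and architecture are assumptions you should adjust.

```shell
# Hypothetical helper: build the download URL for a kind release binary.
# Defaults (0.10.0, linux, amd64) are assumptions; adjust for your machine.
kind_url() {
  printf 'https://kind.sigs.k8s.io/dl/v%s/kind-%s-%s\n' \
    "${1:-0.10.0}" "${2:-linux}" "${3:-amd64}"
}

# Hypothetical helper: download kind into /tmp and move it onto the PATH.
# Runs in a subshell so the cd does not affect the calling shell.
install_kind() (
  cd /tmp || exit 1
  curl -fsSLo ./kind "$(kind_url "$@")" || exit 1
  chmod +x ./kind
  sudo mv ./kind /usr/local/bin/kind
)
```

Calling `install_kind` with no arguments would fetch 0.10.0 for linux/amd64, which should match a typical WSL2 setup; pass a version, OS, and architecture to override the defaults.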

Verify kind is installed.

    $ kind --version
    kind version 0.10.0

Create a multi-node Kubernetes cluster named "dev" whose virtual nodes run as containers in a Docker Desktop instance. Run the following as a bash script. It creates the cluster and switches the current kubectl context to kind-dev.

    # Create a config file for a cluster with 2 control-plane nodes and 3 workers
    cat << EOF > 3workers.yaml
    kind: Cluster
    apiVersion: kind.x-k8s.io/v1alpha4
    nodes:
    - role: control-plane
    - role: control-plane
    - role: worker
    - role: worker
    - role: worker
    EOF
    # Create a new cluster with the config file
    # the context will be kind-dev
    kind create cluster --name dev --config ./3workers.yaml
    # Check how many nodes it created
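If you want to try other cluster shapes, the config file can be generated instead of typed by hand. The following is a minimal sketch, not part of the original walkthrough; `kind_config` is a hypothetical helper name, and it emits the same `kind.x-k8s.io/v1alpha4` layout used above for any number of control-plane and worker nodes.

```shell
# Hypothetical helper: print a kind cluster config to stdout.
# $1: number of control-plane nodes, $2: number of worker nodes.
# The body runs in a subshell so its variables do not leak.
kind_config() (
  control_planes="$1"
  workers="$2"
  printf '%s\n' 'kind: Cluster' 'apiVersion: kind.x-k8s.io/v1alpha4' 'nodes:'
  i=0
  while [ "$i" -lt "$control_planes" ]; do
    printf '%s\n' '- role: control-plane'
    i=$((i + 1))
  done
  i=0
  while [ "$i" -lt "$workers" ]; do
    printf '%s\n' '- role: worker'
    i=$((i + 1))
  done
)
```

For example, `kind_config 2 3 > 3workers.yaml` should reproduce the file from this step, which you can then pass to `kind create cluster --name dev --config ./3workers.yaml`.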

The kind create cluster output should look something like this.

    $ kind create cluster --name dev --config ./3workers.yaml
    Creating cluster "dev" ...
     ✓ Ensuring node image (kindest/node:v1.20.2) 🖼
     ✓ Preparing nodes 📦 📦 📦 📦 📦
     ✓ Configuring the external load balancer ⚖️
     ✓ Writing configuration 📜
     ✓ Starting control-plane 🕹️
     ✓ Installing CNI 🔌
     ✓ Installing StorageClass 💾
     ✓ Joining more control-plane nodes 🎮
     ✓ Joining worker nodes 🚜
    Set kubectl context to "kind-dev"
    You can now use your cluster with:
    kubectl cluster-info --context kind-dev
    Have a nice day! 👋

Verify the cluster-info. The output should list both the Kubernetes master and the KubeDNS endpoints.

    $ kubectl cluster-info --context kind-dev
    Kubernetes master is running at https://127.0.0.1:32768
    KubeDNS is running at https://127.0.0.1:32768/api/v1/namespaces/kube-system/services/kube-dns:dns/proxy
    To further debug and diagnose cluster problems, use 'kubectl cluster-info dump'.

Verify the cluster has 3 worker nodes and 2 control-plane nodes.

    $ kubectl get nodes
    NAME                 STATUS   ROLES    AGE     VERSION
    dev-control-plane    Ready    master   3m45s   v1.20.2
    dev-control-plane2   Ready    master   3m16s   v1.20.2
    dev-worker           Ready    <none>   2m16s   v1.20.2
    dev-worker2          Ready    <none>   2m17s   v1.20.2
    dev-worker3          Ready    <none>   2m19s   v1.20.2
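In a CI script you may prefer to check the node counts automatically rather than by eye. Here is a small sketch under the assumption that worker nodes show `<none>` in the ROLES column, as in the output above; `count_workers` and `count_control_planes` are hypothetical helper names that read `kubectl get nodes --no-headers` output from stdin.

```shell
# Hypothetical helpers: count nodes by the ROLES column (third field).
# Input is expected on stdin without the header row.

# Workers carry no role label, so kubectl prints <none> for them.
count_workers() {
  awk '$3 == "<none>" { n++ } END { print n + 0 }'
}

# Anything with a role label is treated as a control-plane node here.
count_control_planes() {
  awk '$3 != "<none>" { n++ } END { print n + 0 }'
}
```

For example, `kubectl get nodes --no-headers | count_workers` should print 3 for the cluster created in this walkthrough.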

Notice that the 5-node Kubernetes cluster is actually implemented as 5 node containers plus an haproxy load-balancer container.

    $ docker ps
    CONTAINER ID   IMAGE                                COMMAND                  CREATED          STATUS          PORTS                       NAMES
    02a410c37431   kindest/haproxy:v20200708-548e36db   "/docker-entrypoint.…"   25 minutes ago   Up 25 minutes   127.0.0.1:44627->6443/tcp   dev-external-load-balancer
    79f3b52bceb9   kindest/node:v1.20.2                 "/usr/local/bin/entr…"   25 minutes ago   Up 25 minutes                               dev-worker
    2b9c52767f50   kindest/node:v1.20.2                 "/usr/local/bin/entr…"   25 minutes ago   Up 25 minutes                               dev-worker2
    2f9e27ba7ca9   kindest/node:v1.20.2                 "/usr/local/bin/entr…"   25 minutes ago   Up 25 minutes   127.0.0.1:40179->6443/tcp   dev-control-plane
    0b9df49f4739   kindest/node:v1.20.2                 "/usr/local/bin/entr…"   25 minutes ago   Up 25 minutes   127.0.0.1:45147->6443/tcp   dev-control-plane2
    a7a61e9e9418   kindest/node:v1.20.2                 "/usr/local/bin/entr…"   25 minutes ago   Up 25 minutes                               dev-worker3

Show the existing Kubernetes contexts. Notice that the current context, marked with an asterisk, is kind-dev.

    $ kubectl config get-contexts
    CURRENT   NAME                 CLUSTER          AUTHINFO         NAMESPACE
              docker-desktop       docker-desktop   docker-desktop
              docker-for-desktop   docker-desktop   docker-desktop
    *         kind-dev             kind-dev         kind-dev
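If you switch between Docker Desktop's own cluster and the kind cluster, a script can confirm that a context exists before selecting it. This is a minimal sketch; `has_context` is a hypothetical helper name, and it expects one context name per line on stdin, which is what `kubectl config get-contexts -o name` prints.

```shell
# Hypothetical helper: succeed if the context named in $1 appears on stdin.
# -F matches the name literally, -x requires a whole-line match, -q is quiet.
has_context() {
  grep -Fxq -- "$1"
}
```

For example, `kubectl config get-contexts -o name | has_context kind-dev && kubectl config use-context kind-dev` switches to the kind cluster only when that context is present.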

See pretty much everything in all namespaces.

    $ kubectl get all --all-namespaces
    NAMESPACE            NAME                                             READY   STATUS    RESTARTS   AGE
    kube-system          pod/coredns-6955765f44-558dk                     1/1     Running   0          5m15s
    kube-system          pod/coredns-6955765f44-8kjvg                     1/1     Running   0          5m15s
    kube-system          pod/etcd-dev-control-plane                       1/1     Running   0          5m24s
    kube-system          pod/etcd-dev-control-plane2                      1/1     Running   0          4m42s
    kube-system          pod/kindnet-2r2pp                                1/1     Running   0          3m58s
    kube-system          pod/kindnet-6g4lg                                1/1     Running   0          5m14s
    kube-system          pod/kindnet-8kwjr                                1/1     Running   0          4m
    kube-system          pod/kindnet-mlhvw                                1/1     Running   1          4m57s
    kube-system          pod/kindnet-w94fc                                1/1     Running   0          3m57s
    kube-system          pod/kube-apiserver-dev-control-plane             1/1     Running   0          5m24s
    kube-system          pod/kube-apiserver-dev-control-plane2            1/1     Running   0          3m57s
    kube-system          pod/kube-controller-manager-dev-control-plane    1/1     Running   1          5m23s
    kube-system          pod/kube-controller-manager-dev-control-plane2   1/1     Running   0          3m41s
    kube-system          pod/kube-proxy-4vvl8                             1/1     Running   0          4m57s
    kube-system          pod/kube-proxy-dmbhv                             1/1     Running   0          3m58s
    kube-system          pod/kube-proxy-g2kn2                             1/1     Running   0          3m57s
    kube-system          pod/kube-proxy-x5hlf                             1/1     Running   0          5m14s
    kube-system          pod/kube-proxy-xxnbd                             1/1     Running   0          4m
    kube-system          pod/kube-scheduler-dev-control-plane             1/1     Running   1          5m23s
    kube-system          pod/kube-scheduler-dev-control-plane2            1/1     Running   0          3m32s
    local-path-storage   pod/local-path-provisioner-7745554f7f-582tj      1/1     Running   1          5m15s

    NAMESPACE            NAME                 TYPE        CLUSTER-IP   EXTERNAL-IP   PORT(S)                  AGE
    default              service/kubernetes   ClusterIP   10.96.0.1    <none>        443/TCP                  5m35s
    kube-system          service/kube-dns     ClusterIP   10.96.0.10   <none>        53/UDP,53/TCP,9153/TCP   5m24s

    NAMESPACE            NAME                        DESIRED   CURRENT   READY   UP-TO-DATE   AVAILABLE   NODE SELECTOR                 AGE
    kube-system          daemonset.apps/kindnet      5         5         5       5            5           <none>                        5m16s
    kube-system          daemonset.apps/kube-proxy   5         5         5       5            5           beta.kubernetes.io/os=linux   5m24s

    NAMESPACE            NAME                                     READY   UP-TO-DATE   AVAILABLE   AGE
    kube-system          deployment.apps/coredns                  2/2     2            2           5m24s
    local-path-storage   deployment.apps/local-path-provisioner   1/1     1            1           5m15s

    NAMESPACE            NAME                                                DESIRED   CURRENT   READY   AGE
    kube-system          replicaset.apps/coredns-6955765f44                  2         2         2       5m15s
    local-path-storage   replicaset.apps/local-path-provisioner-7745554f7f   1         1         1       5m15s

API server and host access

The standard Docker Desktop Kubernetes endpoint doesn't work. Instead, use kubectl proxy, which makes the Kubernetes API server reachable from outside the cluster.

    $ kubectl proxy
    Starting to serve on 127.0.0.1:8001

You can see the APIs available on the API server by pointing your browser at http://127.0.0.1:8001/
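The same endpoint can be queried from the command line while `kubectl proxy` is running in another terminal. The sketch below only builds the URL; `api_url`, `PROXY_HOST`, and `PROXY_PORT` are names invented for this example, with defaults matching the address shown above.

```shell
# Hypothetical helper: build a URL for an API path served by kubectl proxy.
# PROXY_HOST and PROXY_PORT are assumed variable names; the defaults match
# the "Starting to serve on 127.0.0.1:8001" message.
api_url() {
  printf 'http://%s:%s%s\n' "${PROXY_HOST:-127.0.0.1}" "${PROXY_PORT:-8001}" "$1"
}
```

For example, `curl "$(api_url /version)"` should return the server version as JSON, and `curl "$(api_url /api/v1/nodes)"` should list the five nodes. If you start the proxy on another port with `kubectl proxy --port`, set `PROXY_PORT` to match.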

Delete the kind-created Kubernetes cluster and all assets

You can remove the cluster and everything else with kind delete cluster.

    $ kind delete cluster --name dev
    Deleting cluster "dev" ...

Verify the kind-managed Kubernetes nodes no longer exist.

    $ docker ps
    CONTAINER ID   IMAGE     COMMAND   CREATED   STATUS    PORTS     NAMES
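For an automated teardown check, you could count any containers whose names still start with the cluster name. This is a sketch under the assumption that kind names its containers `<cluster>-...`, as in the `docker ps` output earlier; `leftover_nodes` is a hypothetical helper that reads container names from stdin.

```shell
# Hypothetical helper: count container names that begin with "<cluster>-".
# $1: cluster name; stdin: one container name per line.
# index() does a literal prefix match, so no regex escaping is needed.
leftover_nodes() {
  awk -v prefix="$1-" 'index($0, prefix) == 1 { n++ } END { print n + 0 }'
}
```

For example, `docker ps -a --format '{{.Names}}' | leftover_nodes dev` should print 0 once the cluster has been deleted.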

Resources

- KinD

- Other Links - ignore the minikube part

Changes

- May 16, 2021: changed the kind version from 0.7 to 0.10 and the node container image from 1.17.x to 1.20.2
