Streaming Ecosystems Still Need Extract and Load

Enterprises move from batch to streaming data ingestion to make data available closer to real time. This does not remove the need for extract and load capabilities. Streaming systems only operate on data that is in the stream right now. Data from outside the retention window, or from before the streaming system was implemented, is not available in the stream.
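As a rough illustration of the retention limit, the sketch below models a stream as a plain list and filters it by a retention window. The `Event` class, the `readable_events` helper, and the seven-day window are all hypothetical and chosen only for this example; real streaming platforms enforce retention on the broker side.

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass(frozen=True)
class Event:
    """Hypothetical event shape used only for this illustration."""
    key: str
    payload: dict
    occurred_at: datetime


def readable_events(log, now, retention):
    """Return only the events a stream consumer could still read."""
    cutoff = now - retention
    return [e for e in log if e.occurred_at >= cutoff]


now = datetime(2021, 6, 9, tzinfo=timezone.utc)
log = [
    Event("order-1", {"total": 10}, now - timedelta(days=30)),  # older than retention
    Event("order-2", {"total": 25}, now - timedelta(days=2)),   # still in the stream
]

visible = readable_events(log, now, retention=timedelta(days=7))

# Anything not visible has to arrive through extract and load instead.
missing = [e.key for e in log if e not in visible]
```

Here `order-1` falls outside the window, so a consumer attached to the stream never sees it. Getting it into a target system requires a bulk path.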

A separate set of lifecycle operations still requires some type of bulk processing. Examples include:

- Initial data loads, where data was collected before or outside of streaming processing.

- Original event streams may need to be re-ingested because they were mis-processed or because you want to extract the data differently.

- Original event streams may be fixed or modified and then re-ingested to correct errors or add information in the operational store.

- Privacy and retention rules may require the generation of synthetic events to make data changes that do not, and cannot, originate in the original systems.

- Errors in processing in the original source systems may not be fixable in the original systems in a way that generates consumable correction events.
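To make the synthetic-event case concrete, here is a minimal sketch of a privacy redaction that no source system would ever emit. The event type `subject.redacted`, the `synthetic` flag, and the functions `erasure_event` and `apply_redaction` are assumptions made up for this example, not part of any particular platform.

```python
def erasure_event(subject_id, fields, requested_on):
    """Build a synthetic event describing a privacy-driven redaction."""
    return {
        "type": "subject.redacted",   # hypothetical event type
        "synthetic": True,            # marks the event as not from a source system
        "subject_id": subject_id,
        "fields": list(fields),
        "requested_on": requested_on,
    }


def apply_redaction(store, event):
    """Blank the named fields on the matching record, if it exists."""
    record = store.get(event["subject_id"])
    if record is None:
        return
    for field in event["fields"]:
        if field in record:
            record[field] = None


store = {"c1": {"name": "Ada", "email": "ada@example.com", "tier": "gold"}}
event = erasure_event("c1", ["name", "email"], "2021-06-09")
apply_redaction(store, event)
```

Flagging the event as synthetic lets downstream consumers tell generated corrections apart from events that came from a real source system.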

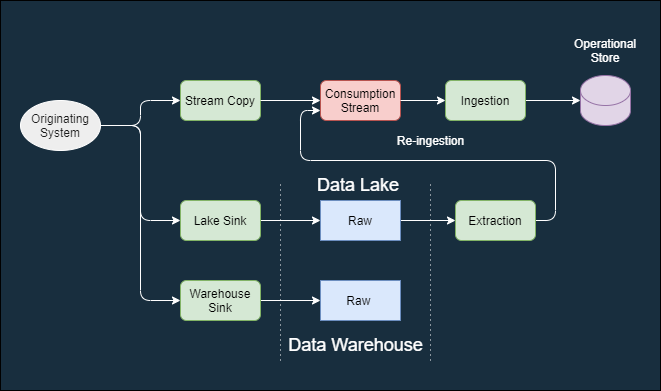

This diagram shows a typical ingestion stream that lands data in three targets: an operational store, a data lake, and a data warehouse.

- The top row represents ingesting a source stream into some type of operational store used by an application or other process.

- The middle row represents ingestion into a data lake like AWS Data Lake, Delta Lake, or Azure Data Lake. The target use case might be machine learning, legacy batch jobs, binary storage, or others.

- The bottom row represents a data warehouse like Snowflake. The target use case might be human analytics, reports or data science exploration.

Re-ingestion is the route data takes when there needs to be an initial load, data fixes, reprocessing to pick up additional fields, or other behavior that operates against historical data. Streaming generally cannot be used for this because streaming data is, by its nature, "right now".

Initial data loads and reprocessing can push data into the operational store by direct database operation or by synthesizing new events that are injected back into the event stream.
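The two routes can be sketched side by side. This is an illustration under stated assumptions, not a reference implementation: the store is a plain dictionary, the stream is a list, and the names `load_directly`, `synthesize_events`, `apply_event`, and the event type `customer.upserted` are hypothetical.

```python
def load_directly(store, rows):
    """Route 1: upsert historical rows straight into the operational store."""
    count = 0
    for row in rows:
        store[row["id"]] = {**store.get(row["id"], {}), **row}
        count += 1
    return count


def synthesize_events(rows, reason):
    """Route 2: wrap historical rows as synthetic events for the stream."""
    return [
        {
            "type": "customer.upserted",  # hypothetical event type
            "synthetic": True,
            "reason": reason,
            "data": dict(row),
        }
        for row in rows
    ]


def apply_event(store, event):
    """The same handler the live stream consumer would use."""
    row = event["data"]
    store[row["id"]] = {**store.get(row["id"], {}), **row}


historical = [{"id": "c1", "name": "Ada"}, {"id": "c2", "name": "Grace"}]

# Route 1: direct database operation.
direct_store = {}
load_directly(direct_store, historical)

# Route 2: inject synthetic events and let the normal consumer apply them.
stream = []
stream.extend(synthesize_events(historical, reason="initial-load"))
event_store = {}
for event in stream:
    apply_event(event_store, event)
```

Both routes end with the same store contents in this sketch. The direct route bypasses the stream's processing logic, while the synthetic-event route reuses the consumer's handler, so every downstream subscriber also sees the replayed data.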

Created 2021 June 09
