Event the heck out of it so that you can drive insights and keep options open

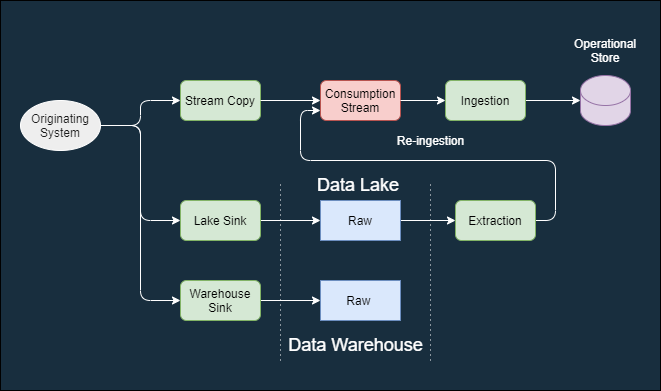

Business and technical events are an early, easy way to capture activity, notify other systems of that activity, record technical changes, and log executed business functions. The information needed for those events tends to reside in specific steps of an execution flow, which means we often need to insert event-generation probes in multiple places and at multiple levels. Product owners need visibility into any business functions or services that are performed. Technical teams need visibility into the detailed activities executed in a system. Partner business functions and data stores need a way to reconstruct the data as it existed at a specific time. The usual alternatives fall short: databases often provide only a current or point-in-time state, logs have PII restrictions, and metrics are statistical by nature.
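To make the idea of event probes concrete, here is a minimal in-memory sketch in Python. Everything in it is an assumption made for illustration: the `Event` and `EventLog` names, the `"business"` and `"technical"` kinds, the event names, and the `place_order` function are hypothetical, and a real system would publish to a durable broker or event store rather than a Python list. It shows probes at two levels of one execution flow, a subscriber being notified, and state being rebuilt as of a given time from business events alone.

```python
from dataclasses import dataclass, field
from datetime import datetime, timezone
from typing import Any, Callable
import uuid


@dataclass(frozen=True)
class Event:
    kind: str                # "business" or "technical" (hypothetical categories)
    name: str                # e.g. "order.placed" (hypothetical naming scheme)
    entity_id: str           # the thing the event is about
    payload: dict[str, Any]  # fields that changed; keep PII out of this
    occurred_at: datetime
    event_id: str = field(default_factory=lambda: str(uuid.uuid4()))


class EventLog:
    """Append-only, in-memory stand-in for a durable event store."""

    def __init__(self) -> None:
        self._events: list[Event] = []
        self._subscribers: list[Callable[[Event], None]] = []

    def subscribe(self, handler: Callable[[Event], None]) -> None:
        # Other systems register here to be notified of activity.
        self._subscribers.append(handler)

    def emit(self, kind, name, entity_id, payload, occurred_at=None) -> Event:
        # Record the event first, then notify every subscriber.
        when = occurred_at or datetime.now(timezone.utc)
        event = Event(kind, name, entity_id, dict(payload), when)
        self._events.append(event)
        for handler in self._subscribers:
            handler(event)
        return event

    def state_as_of(self, entity_id: str, as_of: datetime) -> dict[str, Any]:
        # Replay business events in time order, up to and including `as_of`.
        state: dict[str, Any] = {}
        for event in sorted(self._events, key=lambda e: e.occurred_at):
            if (
                event.entity_id == entity_id
                and event.kind == "business"
                and event.occurred_at <= as_of
            ):
                state.update(event.payload)
        return state


def place_order(log: EventLog, order_id: str, total: int, now: datetime) -> None:
    # Technical probe: a detailed step inside the flow.
    log.emit("technical", "order.validation.completed", order_id, {"step": "validate"}, now)
    # Business probe: the business function that was performed.
    log.emit("business", "order.placed", order_id, {"status": "placed", "total": total}, now)
```

Because every change is kept as its own timestamped event, a partner system could call `state_as_of` with an earlier time and get the order as it looked then, which a table holding only the current row cannot answer.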