Using OpenTelemetry to send Python metrics and traces to Azure Monitor and App Insights

Microsoft has updated the Python libraries that send metrics and traces to Application Insights, covering both third-party library invocations and application-specific custom telemetry. Their OpenTelemetry exporters make it simple to route standard Python OpenTelemetry observations to Application Insights. You bootstrap the Azure configuration and credentials and then use standard OpenTelemetry (OT) calls to capture metrics and trace information.

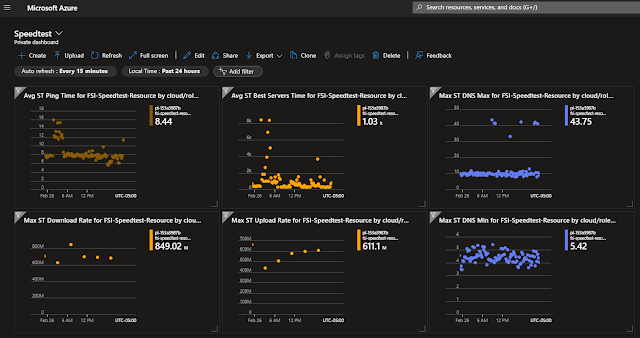

SpeedTest network metrics in Azure using OpenTelemetry

A couple of years ago I wrote a program to record the health of my home internet connection and store that information in Azure Application Insights. I did this with a Python program that leveraged Microsoft's OpenCensus Azure exporter. That library is becoming obsolete with the move from OpenCensus to OpenTelemetry.

That project has now been ported to OpenTelemetry! It involved 6 hours and 40 lines of code, preceded by 20 hours of whining and internet surfing. The hard part was figuring out exactly what needed to change between OpenCensus and OpenTelemetry. It helped that the latest OT Python SDK added a synchronous Gauge that aligned with the previous OC API. It also helped that Microsoft's Azure SDK Python team recently released a simple starter function that handles most of the config with a single call and no exporter configuration. You may wish to configure things manually for fine-tuning, but configure_azure_monitor() was a great starting point. See the code on GitHub.

Automatic Instrumentation of Libraries vs Custom

The Python OpenTelemetry library can automatically add metric and tracing probes to standard Python modules and libraries, resulting in end-to-end call tracing across endpoints. The speedtest program is a batch job that essentially uses Azure Monitor as my operational dashboard. I only wanted custom metrics and traces and did not want to capture any instrumentation injected by OpenTelemetry itself.

Configure OpenTelemetry and Azure exporters

The following code is a point-in-time snapshot of ApplicationInsights.py in the speedtest-app-insights GitHub repository.

I capture only my specific metrics and traces. This meant I had to disable the automatic integrations. This is done by setting environment variables. I do this from inside the Python program.

OpenTelemetry already captures my host name, but I also wanted to know which program or service generated the metrics. I set the App Insights cloud role name to my program name by setting the OpenTelemetry service.name resource attribute through the OTEL_RESOURCE_ATTRIBUTES environment variable.

    import os

    from azure.monitor.opentelemetry import configure_azure_monitor
    from opentelemetry import environment_variables


    # Call this if you want to send logs to Azure App Insights.
    # After this, every log(warn) will end up in Azure as a log event
    # ("trace", not "tracing").
    def register_azure_monitor(
        azure_connection_string: str,
        cloud_role_name: str,
        capture_logs: bool = False,
    ) -> None:
        # Cloud Role Name uses the service.namespace and service.name attributes.
        # It falls back to service.name if service.namespace isn't set;
        # otherwise it is the concatenation ${service.namespace}.${service.name}.
        # Cloud Role Instance uses the service.instance.id attribute value.
        os.environ["OTEL_RESOURCE_ATTRIBUTES"] = f"service.name={cloud_role_name}"

        # Disable exporters by setting these variables to "none".
        #
        # NetChecks: 7 items sent with or without integrations enabled
        if not capture_logs:
            os.environ[environment_variables.OTEL_LOGS_EXPORTER] = "none"
        # NetChecks: 4 traces, 6 if integrations are enabled
        # os.environ[environment_variables.OTEL_TRACES_EXPORTER] = "none"
        # NetChecks: 3 metrics, 5 if integrations are enabled
        # os.environ[environment_variables.OTEL_METRICS_EXPORTER] = "none"

        # Upload and download operations involve multiple HTTP packets, which
        # are all captured as metrics, traces and logs if we leave
        # the urllib integration enabled.
        os.environ["OTEL_PYTHON_DISABLED_INSTRUMENTATIONS"] = (
            "azure_sdk,django,fastapi,flask,psycopg2,urllib,urllib3"
        )

        # not sure what value to put here
        # os.environ[environment_variables.LOGGER_NAME_ARG] = "__name__"

        configure_azure_monitor(
            connection_string=azure_connection_string,
            disable_offline_storage=True,
        )

The main program has to call register_azure_monitor() on startup.
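
Because these switches are plain environment variables, they may already be set by the shell or a service definition when the program starts, and the function above overwrites them. The following is a minimal sketch of merging instead of overwriting. The helper name `disable_instrumentations` is hypothetical and is not part of the repository or of any library.

```python
import os

ENV_VAR = "OTEL_PYTHON_DISABLED_INSTRUMENTATIONS"


def disable_instrumentations(names: list[str]) -> str:
    """Add names to the comma-separated list, keeping any existing entries.

    Hypothetical helper, shown for illustration only.
    """
    current = os.environ.get(ENV_VAR, "")
    existing = [name.strip() for name in current.split(",") if name.strip()]
    # dict.fromkeys() removes duplicates while preserving first-seen order.
    merged = list(dict.fromkeys(existing + names))
    os.environ[ENV_VAR] = ",".join(merged)
    return os.environ[ENV_VAR]
```

As in the function above, a call such as `disable_instrumentations(["urllib", "urllib3"])` would need to run before `configure_azure_monitor()` so the setting is in place when the integrations are loaded.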

Azure Cloud RoleName and RoleInstance

Azure App Insights has standard metadata fields including the cloud_RoleName and the cloud_RoleInstance. These historical AppInsights fields are populated from specific OpenTelemetry properties.

- The cloud_RoleInstance represents the host or runtime container of the program. In my case, it is the hostname of my PC, my Raspberry Pi, or IoT device. I was OK with the default mapped in by OpenTelemetry and didn't have to override it.

- The cloud_RoleName often contains the application name, function name, or process name. The default OpenTelemetry value didn't work for me, so I set the service.name resource attribute through the OTEL_RESOURCE_ATTRIBUTES environment variable. OTel picks it up, and it is then mapped into the App Insights field of a different name.
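
The mapping between the OpenTelemetry resource attributes and the two App Insights fields can be sketched in plain Python. This is only an illustration of the rule described in this post, not Azure's implementation. Both function names are hypothetical, and the sketch assumes attribute values contain no commas or equals signs.

```python
def build_resource_attributes(
    name: str, namespace: str = "", instance_id: str = ""
) -> str:
    """Build a value for the OTEL_RESOURCE_ATTRIBUTES environment variable."""
    attributes = {"service.name": name}
    if namespace:
        attributes["service.namespace"] = namespace
    if instance_id:
        # Feeds cloud_RoleInstance; leave it out to keep the default.
        attributes["service.instance.id"] = instance_id
    return ",".join(f"{key}={value}" for key, value in attributes.items())


def expected_cloud_role_name(name: str, namespace: str = "") -> str:
    """Mirror the described rule: namespace.name, or name alone."""
    return f"{namespace}.{name}" if namespace else name


print(build_resource_attributes("speedtest", namespace="home"))
print(expected_cloud_role_name("speedtest", namespace="home"))
```

With only `service.name` set, as in this program, the expected cloud_RoleName is simply the program name.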

Generating Metrics

Creating the OT meter

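Metrics, gauges, and counters are grouped under a meter. This function lets my various modules spin up a meter with the passed-in name.

    from opentelemetry import metrics
    from opentelemetry.metrics import Meter


    # Returns a meter that gauges can be connected to.
    # azure_connection_string is not used here; the meter comes from
    # the global meter provider.
    def create_ot_meter(meter_name: str, azure_connection_string: str) -> Meter:
        meter = metrics.get_meter_provider().get_meter(name=meter_name)
        return meter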

Create a Gauge for the meter

The speed test program captures server acquisition times, ping times, and upload/download speeds. I did all this in gauges tied to the same meter. This code creates the meter and gauges, one in this case.

This particular program:

- Starts

- Runs a speed test

- Records the results of the test.

- Terminates

The new non-callback gauge is perfect for this.

    meter = create_ot_meter(
        meter_name="SpeedTest", azure_connection_string=load_insights_key()
    )

    # perf data gathered while running the test
    get_servers_gauge = meter.create_gauge(
        name="ST_Servers_Time",
        unit="ms",
        description="Amount of time it took to get_servers()",
    )

Recording a value in a gauge without a callback

The gauge records server acquisition time. This program runs, measures, records, and exits. We set the gauge imperatively so we don't have to hang around waiting for callbacks.

    get_servers_gauge.set(
        amount=round(number=float(json_data["get_servers"]), ndigits=3),
        attributes=run_attributes,
    )

Generating Traces

A single run involves 3 to 5 steps. I wanted to capture the top-level span as a trace with 3-5 sub-spans so I could see graphically how much time was spent in each stage.

Creating a Tracer named after this program

This creates a tracer named after the current module. The spans that make up the trace are created from this tracer.

    from opentelemetry import trace
    from opentelemetry.trace import Tracer


    # Returns an OpenTelemetry Tracer that is bound to Azure Application Insights
    def create_ot_tracer() -> Tracer:
        # Get a tracer for the current module.
        tracer = trace.get_tracer(__name__)
        return tracer

Generating a Custom Trace

Here we record nested tracer spans. The outer span's duration covers the entire runtime of all the spans inside it. This code shows one outer span with four or five inner spans.

    # Nested tracing spans will be children of this one
    with tracer.start_as_current_span(name="main"):
        # getting the servers does a ping
        s = speedtest.Speedtest(secure=1)
        with tracer.start_as_current_span(name="get_servers"):
            retrieved_servers = s.get_servers(servers=servers)
        with tracer.start_as_current_span(name="get_best_servers"):
            retrieved_best_server = s.get_best_server(servers=servers)
        with tracer.start_as_current_span(name="measure_download"):
            s.download(threads=threads)
        with tracer.start_as_current_span(name="measure_upload"):
            s.upload(threads=threads)
        if should_share:
            with tracer.start_as_current_span(name="sharing_is_caring"):
                s.results.share()

Speedtest application flow

The program captures metrics and traces for a network measurement application. This flow is described in the docs on GitHub.

GitHub

This sample code comes from the freemansoft speedtest-app-insights GitHub repo.

References

The primary references used in converting this app from OpenCensus to OpenTelemetry:

- https://learn.microsoft.com/en-us/azure/azure-monitor/app/opentelemetry-enable?tabs=python

- https://learn.microsoft.com/en-us/azure/azure-monitor/app/opentelemetry-python-opencensus-migrate?tabs=aspnetcore

- https://learn.microsoft.com/en-us/azure/azure-monitor/app/opentelemetry-add-modify

- https://learn.microsoft.com/en-us/azure/azure-monitor/app/opentelemetry-configuration

Azure Monitor OpenTelemetry SDK for Python

- https://learn.microsoft.com/en-us/python/api/overview/azure/monitor-opentelemetry-exporter-readme?view=azure-python-preview

- https://opentelemetry-python.readthedocs.io/en/latest/sdk/environment_variables.html

Revision History

Created 2024 02
