Building a development cloud using nested virtualization.

This article is not for you if you are happy developing and testing with at most two machines: your developer machine and a machine under test. It is also not for you if all of your product evaluation, training and testing can only be done in Azure or another cloud environment that doesn't support nested virtualization.

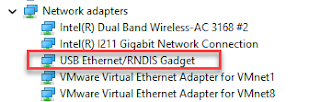

This is really about building your own virtual labs or data centers to simulate larger installations or to use as training environments. Microsoft applications or machine clusters often include Active Directory, a database and some application servers. You can sometimes install all of this on a single machine. Multiple machines make sense if you are working on clustering or wish to reuse portions of your setup in future projects.

Microsoft often provides VHDs for some of its more complicated products to save you configuration time. These machines are often more interesting when integrated into some type of application environment that includes AD, databases or other tools. I've used their TFS and SCOM evaluation servers. I always needed Active Directory and some other machines to monitor, build or otherwise fill out my micro data center.

Virtual Data Center Concept

The normal "build a couple of virtual machines on my dev machine or server" approach makes for a muddy system diagram for the machines under test. The guests depend on each other and on the host machine, which is also used in non-server ways, possibly as a development or monitoring machine. It is hard to simulate two separate data centers in this configuration since the main host machine can't be "cloned" into the second environment.

We'd really like a "micro cloud" or virtual data center that can be moved from machine to machine and left unmodified. This implies that all of the machines reside inside a virtual container that can be cloned or moved. We can create as many class or lab clusters as we need by cloning the virtual container.

This lets us create a portable micro data center that stays coherent and intact across copy and move operations.

N Level Virtual Nesting

The diagrams above describe a minimum of three levels of virtual containers and machines, which implies that we want to run nested virtualized environments:

- The container that hosts the Virtual Lab environment.

- The inner host representing a Virtual Lab instance, a container that contains all of the virtual guest machines for a given environment.

- The guest machines themselves that do all the work.
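
The three levels above can be sketched as a small tree, which makes it easy to check how deep a given lab nests. This is only an illustrative model: the `Node` class, the `depth` helper and all machine names are assumptions made for this example and do not correspond to any VMware or Hyper-V API.

```python
from dataclasses import dataclass, field
from typing import List


@dataclass
class Node:
    """One virtualization layer or machine; names are illustrative only."""
    name: str
    children: List["Node"] = field(default_factory=list)


def depth(node: Node) -> int:
    """Count the levels from this node down to its deepest guest."""
    if not node.children:
        return 1
    return 1 + max(depth(child) for child in node.children)


# Hypothetical lab: outer container -> inner Hyper-V host -> guest machines.
lab = Node("outer-container", [
    Node("lab-1-hyperv-host", [
        Node("ad-dns"),
        Node("database"),
        Node("app-server"),
    ]),
])

print(depth(lab))  # 3
```

Adding a second inner host under `outer-container` would model a second cloned lab without changing the depth.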

VMware has pretty much always supported nested virtual machines using its lightweight hypervisor. This is the lowest-resource way of handling the problem. It won't work by itself in this case because we have Microsoft-provided VHDs that have hard Hyper-V dependencies.

Many Microsoft example machines come in VHD format. They can have unexpected VM dependencies that force a Hyper-V virtual host into the mix. Hyper-V releases before Windows Server 2016 don't support nested virtual machines, which makes our target configuration impossible in a Hyper-V-only environment on those releases.

VMware supports nested virtual host environments and also supports Windows Server Hyper-V as a guest machine. This means we can run VMware virtualization on our server or development machine with a Windows Server Hyper-V host inside it. You could add one more layer of VMs by using another layer of VMware hypervisor.

We can slightly simplify this diagram when running this on a developer workstation if we assume that each micro-cloud Hyper-V host can run in its own virtual host. Replace the outside hypervisor with a "virtualization environment". We run Windows guest machines inside a Windows Server Hyper-V host inside a Windows virtual environment.

Infrastructure: Networking

The virtual-lab / micro-center environment works because the internal part of the network always looks the same no matter where the outer host moves. The external network can be DHCP with no reverse DNS capability.

I usually have two network switches, one internal and one external. I then bind the various network adapters to those two switches to get the networking behavior I want.

Stable Private Networking

- The internal network addresses are either fixed IP or retrieved from a DHCP server that is on the same internal network. The machines cannot grab their internal network addresses from a server outside the network.

- The internal network has its own DNS so that all network lookups inside the virtual cloud always resolve no matter what happens outside the environment. One of the machines should be a DNS server. I usually make the AD server act as the DNS server.
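
A quick way to sanity-check these two rules is to confirm that every guest address, and the DHCP and DNS servers, fall inside the internal subnet. The sketch below uses Python's standard `ipaddress` module; the subnet, host names and addresses are assumptions invented for the example, not values from a real lab.

```python
import ipaddress

# Assumed internal lab subnet; any private range would do.
INTERNAL_NET = ipaddress.ip_network("192.168.50.0/24")

# Hypothetical lab inventory: machine name -> internal address.
LAB_HOSTS = {
    "ad-dns": "192.168.50.10",
    "database": "192.168.50.20",
    "app-server": "192.168.50.30",
}
DHCP_SERVER = "192.168.50.10"  # assumed to run on the AD machine
DNS_SERVER = "192.168.50.10"   # the AD server doubles as DNS


def outside_internal_net(addresses):
    """Return the addresses that do not belong to the internal subnet."""
    return [a for a in addresses if ipaddress.ip_address(a) not in INTERNAL_NET]


def check_lab():
    """Collect problems that would break the lab when the outer host moves."""
    problems = []
    for addr in outside_internal_net(LAB_HOSTS.values()):
        problems.append(f"host address {addr} is outside {INTERNAL_NET}")
    for role, addr in (("DHCP", DHCP_SERVER), ("DNS", DNS_SERVER)):
        if outside_internal_net([addr]):
            problems.append(f"{role} server {addr} is outside {INTERNAL_NET}")
    return problems


print(check_lab())  # an empty list means everything lives on the internal network
```

If the DNS or DHCP server pointed at an address on the external network, the lab would depend on whatever happens to be outside the container, which is exactly what the rules above are meant to prevent.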

Accessing the Outside World

Machines can operate in a totally isolated environment or they can have access to the internet for updates and other functions. You have a few choices for providing outside access:

- Provide each guest VM an external network address.

- Designate one of the machines to act as a gateway for the others.

- Use the virtualization container as a gateway.
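
The options above can be compared with a small model of which virtual switches each machine is bound to. This is a rough sketch under assumed names: `Machine`, `outside_access` and the switch labels are hypothetical, and the container-as-gateway case is modeled simply by treating the container as the gateway machine.

```python
from dataclasses import dataclass
from typing import Dict, FrozenSet, Iterable

INTERNAL, EXTERNAL = "internal", "external"


@dataclass(frozen=True)
class Machine:
    """A machine and the virtual switches its adapters are bound to."""
    name: str
    switches: FrozenSet[str]
    is_gateway: bool = False


def outside_access(machines: Iterable[Machine]) -> Dict[str, str]:
    """Describe how each machine reaches the outside world, if at all."""
    machines = list(machines)
    # A usable gateway must sit on both the internal and external switch.
    has_gateway = any(
        m.is_gateway and {INTERNAL, EXTERNAL} <= m.switches for m in machines
    )
    result = {}
    for m in machines:
        if EXTERNAL in m.switches:
            result[m.name] = "direct"
        elif INTERNAL in m.switches and has_gateway:
            result[m.name] = "via gateway"
        else:
            result[m.name] = "isolated"
    return result


# Hypothetical lab where one hub machine acts as the gateway.
lab = [
    Machine("hub", frozenset({INTERNAL, EXTERNAL}), is_gateway=True),
    Machine("database", frozenset({INTERNAL})),
    Machine("app-server", frozenset({INTERNAL})),
]
print(outside_access(lab))
```

Dropping the `is_gateway` flag from `hub` turns the other two machines into isolated guests, which corresponds to the fully isolated environment mentioned above.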

Infrastructure: Active Directory

Active Directory is the primary system, user, DNS and network configuration component. Every environment should have an Active Directory server or have access to one. Developers often don't have enough access to the corporate Active Directory to set up virtual labs and micro clouds. All worker, application or business applications run on guest machines in both of the configurations below.

The hypervisor is a thin container with no higher-level business functions. You don't add or remove features. Active Directory servers are guest systems like any other guest in an all-hypervisor-hosted cloud. This provides a very regular pattern, making the network look like a network of physical machines.

Hyper-V servers can be treated like a hypervisor, hosting no higher-level functions. I often add the Active Directory and DNS features to the Hyper-V host, making that my "hub" machine. My lab environments are often small enough that I don't need a sole-purpose AD machine.

You are paying the fully loaded cost of running a Windows Server to host the virtual machines in terms of attack vectors, patching, disk space and CPU. You might as well add network and administrative functions to the host that are not true parts of your application.
